There are other solutions out there. I found two different Python script collections that were said to do the same thing, but the interfaces for both sucked, and at least one of them had insane requirements, like installing a fully functioning messaging system (ActiveMQ) just to work! Needless to say, I thought the same thing could be accomplished with fewer requirements and more ease of use, so I built my own solution and released it on Google Code. It uses Pyro4 for RMI functionality and messaging, and pyftpdlib to move files back and forth over the network. (This may be less efficient than using network shares, yes, but I figured it would make things a little more flexible.)

Note: As mentioned above, this was made in only a week, so please excuse any bugs or rough edges; I'm still working on it! That said, please reply with any feature requests or bugs you find. It would be most appreciated.

To use this script set you only need five things, none of which should be too unreasonable:

- Python 2.7 (python.org)

- Pyro4 (available from PyPI)

- pyftpdlib (available from PyPI)

- wxPython (wxpython.org)

- Handbrake CLI 0.9.5 (handbrake.fr)

Note: You can probably get away with an older version of Handbrake, assuming the command-line interface has not changed significantly. On that note, you can probably get away with older versions of Python and wxPython as well. (At least back to Python 2.6, I'd imagine.)
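If you want a quick way to see which of the requirements above are already in place, something like the following could help. This is only a rough sketch and not part of the released scripts: the helper names `missing_modules` and `find_on_path` are made up for illustration, and it assumes the Handbrake command-line binary is called `HandBrakeCLI` and sits on your `PATH`, which may not match your install.

```python
import importlib
import os


def missing_modules(names):
    """Return the module names from `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            missing.append(name)
    return missing


def find_on_path(program):
    """Return the full path of `program` if it is found on PATH, else None."""
    for folder in os.environ.get("PATH", "").split(os.pathsep):
        if not folder:
            continue
        # Also try the ".exe" suffix so the same check works on Windows.
        for candidate in (program, program + ".exe"):
            full = os.path.join(folder, candidate)
            if os.path.isfile(full):
                return full
    return None


if __name__ == "__main__":
    # wxPython is imported as "wx"; the other two import under their PyPI names.
    for name in missing_modules(["Pyro4", "pyftpdlib", "wx"]):
        print("Missing Python module: " + name)
    # Assumption: the binary is named HandBrakeCLI and is expected on PATH.
    if find_on_path("HandBrakeCLI") is None:
        print("HandBrakeCLI was not found on PATH")
```

It only reports what is missing; it does not check version numbers.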

This has been tested on Windows 7 (64 and 32 bit) and on Fedora Core 14 (32 bit), but I imagine it should work wherever you've got Python 2.7 and wxPython available (e.g. BSD, MacOS, etc.).

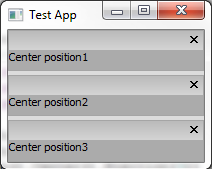

Below is a screenshot of the UI in action:

|

| You can see from the above that there are three encoders at work, and they'll continue to grab tasks from the central server until all the work is done. You can find the scripts and a wiki about how to get things running at: http://code.google.com/p/clustered-handbrake/ |
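For the curious, the "encoders keep grabbing tasks until the work is done" idea can be sketched in a few lines. To be clear, this is not the project's actual code: the real scripts talk to the central server over Pyro4 and move files with pyftpdlib, whereas here a local in-process queue stands in for the server and threads stand in for the encoder machines. The function name `run_encoders` and the file names are made up for illustration.

```python
import threading

try:
    import queue              # Python 3
except ImportError:
    import Queue as queue     # Python 2.7


def run_encoders(tasks, encoder_names):
    """Let each encoder pull tasks until none are left.

    Returns a dict mapping each encoder name to the tasks it handled.
    """
    pending = queue.Queue()   # stand-in for the central server's task list
    for task in tasks:
        pending.put(task)

    done = dict((name, []) for name in encoder_names)

    def encoder_loop(name):
        while True:
            try:
                task = pending.get_nowait()   # ask for the next task
            except queue.Empty:
                return                        # nothing left, so this encoder stops
            # A real encoder would fetch the file and run Handbrake here.
            done[name].append(task)

    threads = [threading.Thread(target=encoder_loop, args=(name,))
               for name in encoder_names]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return done


if __name__ == "__main__":
    result = run_encoders(["a.avi", "b.avi", "c.avi", "d.avi"],
                          ["enc1", "enc2", "enc3"])
    for name in sorted(result):
        print("%s handled %s" % (name, result[name]))
```

The nice thing about pulling rather than pushing is that a faster machine simply ends up asking for more tasks, so nobody has to decide up front how to split the work. Which encoder gets which file will vary from run to run.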